@Paul's theory is quite right. In this answer I use perf and debugger to dive in in order to back this theory up.

First, let's take a look where there running time is spent (see listings for run.py bellow for the exact code).

For n=1 we see the following:

Event count (approx.): 3388750000

Overhead Command Shared Object Symbol

34,04% python umath.cpython-36m-x86_64-linux-gnu.so [.] DOUBLE_less

32,71% python multiarray.cpython-36m-x86_64-linux-gnu.so [.] _aligned_strided_to_contig_size8_srcstride0

28,16% python libc-2.23.so [.] __memmove_ssse3_back

1,46% python multiarray.cpython-36m-x86_64-linux-gnu.so [.] PyArray_TransferNDimToStrided

compared to n=2:

Event count (approx.): 28954250000

Overhead Command Shared Object Symbol

40,85% python libc-2.23.so [.] __memmove_ssse3_back

40,16% python multiarray.cpython-36m-x86_64-linux-gnu.so [.] PyArray_TransferNDimToStrided

8,61% python umath.cpython-36m-x86_64-linux-gnu.so [.] DOUBLE_less

8,41% python multiarray.cpython-36m-x86_64-linux-gnu.so [.] _contig_to_contig

For n=2, there are 8.5 times more events counted, but only for twice the data, so we need to explain the slowdown-factor of 4.

Another important observation: the running time is dominated by memory-operations for n=2 and (less obvious) also for n=1 (_aligned_strided_to_contig_size8_srcstride0 is all about copying data), they overweight the costs for comparison - DOUBLE_less.

Obviously, PyArray_TransferNDimtoStrided is called for both sizes, so why is there such a great difference in its share of the running time?

The shown self-time of PyArray_TransferNDimtoStrided isn't the time needed for copying, but the overhead: the pointers are adjusted, so that in the last dimension can be copied in one go via stransfer:

PyArray_TransferNDimToStrided(npy_intp ndim,

....

/* A loop for dimensions 0 and 1 */

for (i = 0; i < shape1; ++i) {

if (shape0 >= count) {

stransfer(dst, dst_stride, src, src_stride0,

count, src_itemsize, data);

return 0;

}

else {

stransfer(dst, dst_stride, src, src_stride0,

shape0, src_itemsize, data);

}

count -= shape0;

src += src_stride1;

dst += shape0*dst_stride;

}

...

These stransfer-functions are _aligned_strided_to_contig_size8_srcstride0 (see generated code in the listing further below) and _contig_to_contig:

_contig_to_contig is used in case of n=2 and tranfers 2-doubles (last dimension has 2 values), the overhead of adjusting the pointers is pretty high!_aligned_strided_to_contig_size8_srcstride0 is used for n=1 and transfers 1000 doubles per call (as @Paul pointed out and as we will see soon, numpy is clever enough to discard dimensions, which are 1-element long), the overhead of adjusting the pointers can be neglected.

Btw, these functions are used instead of a simple for-loop in order to use vectorization of modern CPUs: with stride known at compile time the compiler is able to vectorize the code (which compilers are often not able to do for strides only known at runtime), thus numpy analyzes the access pattern and dispatches to different precompiled functions.

One question left: Does numpy really discard last dimension, if its size is 1, as our observations suggest?

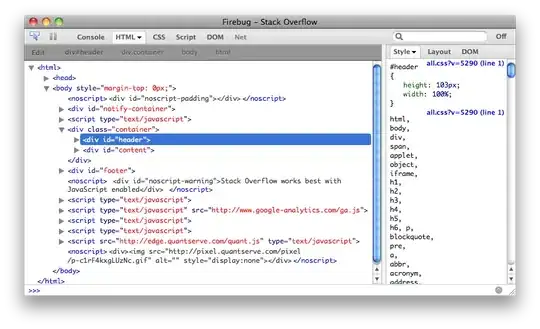

It is easy to verify with a debbuger:

As for the speed-factor 4 which is "lost" when comparing n=2 to n=1: It has no special meaning and is just a random value on my maschine: Changing the dimension of the matrix from 10^3 to 10^4 would shift the advantage even further (less overhead) even further to n=1-case, which leads on my machine to lost-speed-factor 12.

run.py

import sys

import numpy as np

n=int(sys.argv[1])

x, y = (np.random.uniform(size=(1, 1000, n)),

np.random.uniform(size=(1000, 1, n)))

for _ in range(10000):

y<x

and then:

perf record python run.py 1

perf report

....

perf record python run.py 2

perf report

Generated source of _aligned_strided_to_contig_size8_srcstride0:

/*

* specialized copy and swap for source stride 0,

* interestingly unrolling here is like above is only marginally profitable for

* small types and detrimental for >= 8byte moves on x86

* but it profits from vectorization enabled with -O3

*/

#if (0 == 0) && 1

static NPY_GCC_OPT_3 void

_aligned_strided_to_contig_size8_srcstride0(char *dst,

npy_intp dst_stride,

char *src, npy_intp NPY_UNUSED(src_stride),

npy_intp N, npy_intp NPY_UNUSED(src_itemsize),

NpyAuxData *NPY_UNUSED(data))

{

#if 8 != 16

# if !(8 == 1 && 1)

npy_uint64 temp;

# endif

#else

npy_uint64 temp0, temp1;

#endif

if (N == 0) {

return;

}

#if 1 && 8 != 16

/* sanity check */

assert(npy_is_aligned(dst, _ALIGN(npy_uint64)));

assert(npy_is_aligned(src, _ALIGN(npy_uint64)));

#endif

#if 8 == 1 && 1

memset(dst, *src, N);

#else

# if 8 != 16

temp = _NPY_NOP8(*((npy_uint64 *)src));

# else

# if 0 == 0

temp0 = (*((npy_uint64 *)src));

temp1 = (*((npy_uint64 *)src + 1));

# elif 0 == 1

temp0 = _NPY_SWAP8(*((npy_uint64 *)src + 1));

temp1 = _NPY_SWAP8(*((npy_uint64 *)src));

# elif 0 == 2

temp0 = _NPY_SWAP8(*((npy_uint64 *)src));

temp1 = _NPY_SWAP8(*((npy_uint64 *)src + 1));

# endif

# endif

while (N > 0) {

# if 8 != 16

*((npy_uint64 *)dst) = temp;

# else

*((npy_uint64 *)dst) = temp0;

*((npy_uint64 *)dst + 1) = temp1;

# endif

# if 1

dst += 8;

# else

dst += dst_stride;

# endif

--N;

}

#endif/* @elsize == 1 && 1 -- else */

}

#endif/* (0 == 0) && 1 */